How AI is Transforming PE Portfolio Company Operations in 2026

Enterprise AI transformation is underway, but we are not yet in the era of fully autonomous operations. What we have today are emerging standards, targeted agents removing manual labor from specific workflows, and real implementations delivering real results. Full adoption—AI running entire back offices end-to-end—is not here yet.

For portfolio companies, that distinction matters enormously. 2026 and 2027 are not years to wait and watch, but to build readiness. The companies that understand what AI can do today, what it cannot do yet, and what infrastructure decisions need to be made now will be the ones best positioned when the next phases arrive.

This piece covers the full picture: how AI evolved into something that can actually work inside your systems, what the emerging technical standards mean for portco operations, what decisions PE firms need to make around data and infrastructure, and where this is all heading—with examples from real deployments.

From "show and tell" to "do": how AI learned to act

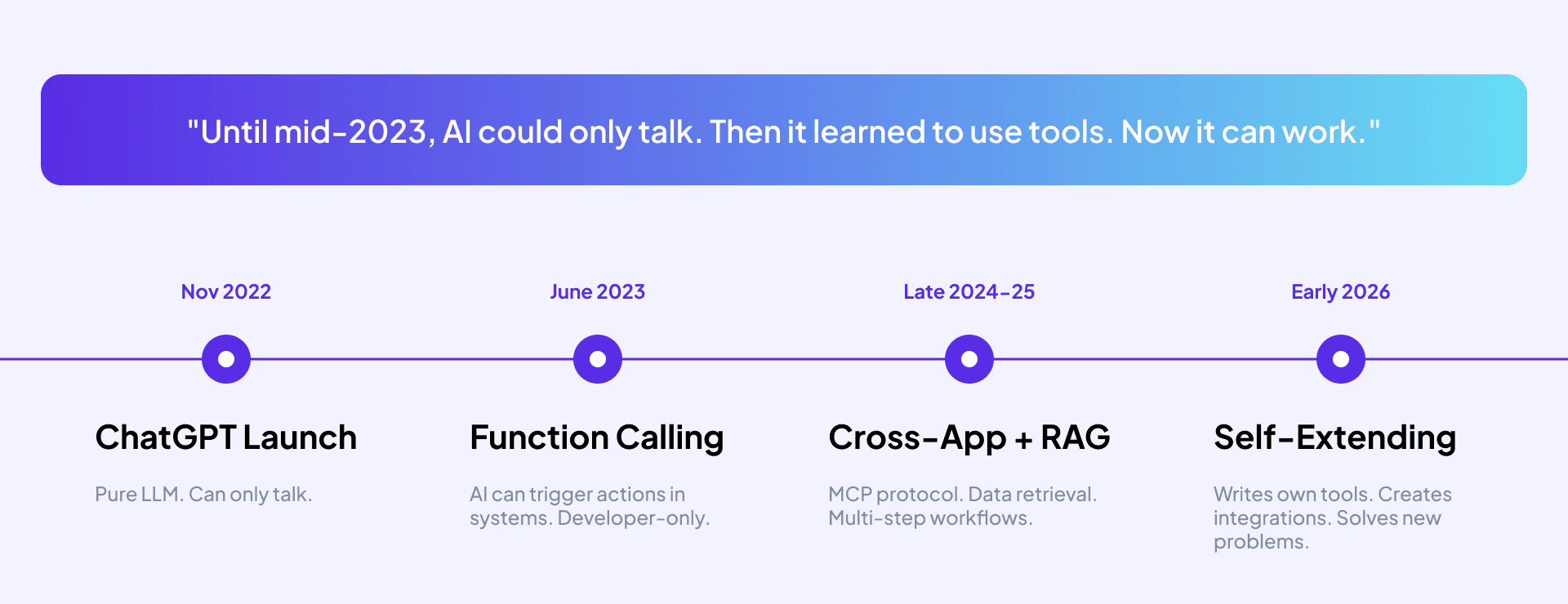

When ChatGPT launched in late 2022, it was a remarkable conversational partner. It could explain things, summarize documents, draft text. What it could not do was interact with your systems, execute actions, or operate within your actual business processes. It had no hands.

That changed in stages—not overnight.

In mid-2023, AI gained function calling capability. Think of it as an API for AI: the ability to trigger predefined actions within connected systems. Developers could now wire AI into workflows so it could actually do things, not just say things. For the first time, AI could reach out and touch a system.

Late 2024 into 2025 brought a more significant leap: cross-application connectivity, retrieval-augmented generation (RAG), and multi-step workflows. RAG in particular was a breakthrough—AI was no longer limited to its training data. It could read new context, query databases, retrieve documents, and actually learn from your operational environment in real time.

By early 2026, AI crossed another threshold: self-extension. If an AI agent encounters a problem it cannot solve with its existing tools, it can now write code to address it. It is no longer constrained to what someone programmed ahead of time.

What changed technically—and why it matters for operations

The capabilities that now make AI useful inside portco operations come down to four specific things it can do that it could not do before.

- Simulate user behavior. AI can navigate websites, click buttons, fill in forms, and search within systems—everything a person does on a screen, but faster, at scale, and without errors. Robotic process automation handled a version of this for years. AI makes it dramatically more flexible.

- Reason and decide. Traditional automation was brittle: it could only handle predefined cases. If something unexpected happened, it hit an exception and escalated to a human. AI changes this because it can evaluate options and make decisions within a defined confidence threshold. This is what makes it possible to automate processes that involve variability—which is most of them.

- Execute backend actions. When machines communicate directly, they don't need a screen. AI can reach into systems through APIs, read inventory levels, update records, and trigger workflows without ever opening a user interface. This is significantly faster and more reliable than UI-based automation.

- Write its own code. When a solution doesn't exist, AI can create it. This means automation projects that previously stalled because of integration gaps or missing connectors now have a path forward.

The emerging standards that will define what comes next

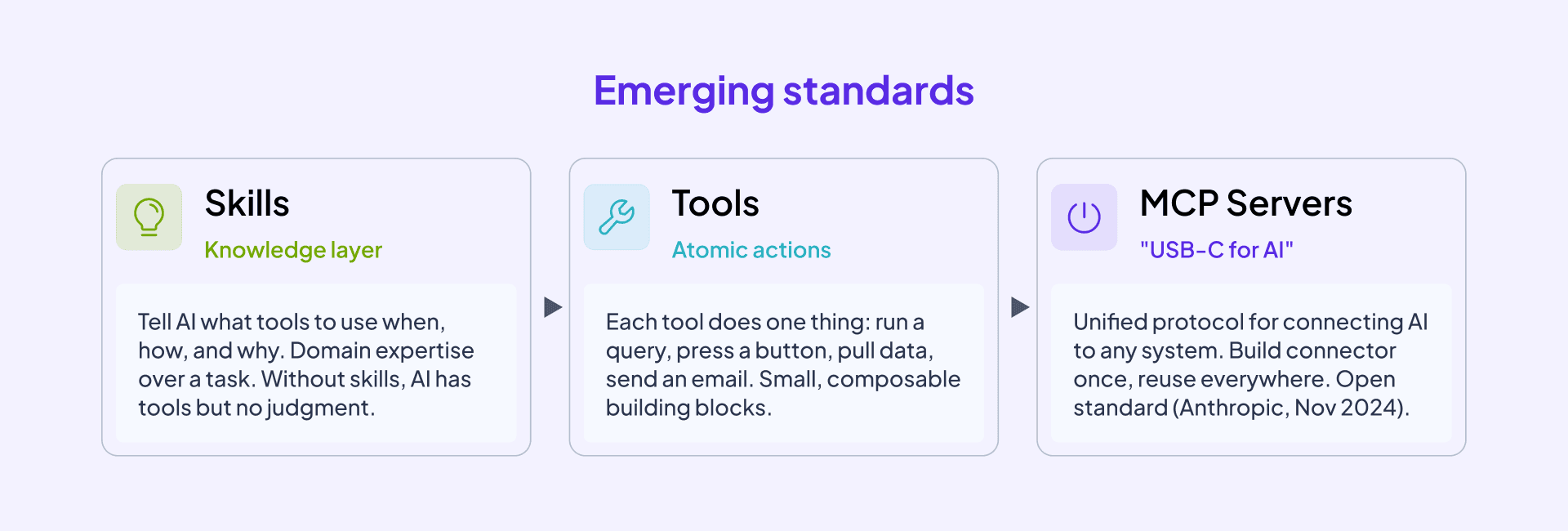

One of the biggest constraints on enterprise AI today is that there is no unified standard for connecting AI to different systems. If you want AI to interact with your ERP, your CRM, your email platform, and your reporting tools, each integration must be built separately. That's expensive, fragile, and doesn't scale.

That is changing. The Model Context Protocol (MCP), introduced by Anthropic in late 2024, is an open standard designed to solve this problem. Think of it as USB-C for AI: one connection standard that lets AI plug into any system without custom-built integrations for each one.

MCP works alongside two other layers. Tools are atomic actions—the singular things AI can execute, like running a query, sending an email, or pressing a button. Skills are the knowledge layer—instructions that tell AI what tools to use, when, and why. Without skills, AI has tools but no judgment. Without tools, AI has knowledge but no ability to act.

Together, MCP servers, tools, and skills are what turn a conversational AI into something that can actually work inside an operation. As these standards mature and more software providers expose their systems through MCP, the cost and complexity of building AI-enabled workflows will drop dramatically. We are not fully there yet—but the direction is clear.

Infrastructure and data: the decisions your portcos need to make now

Before any AI deployment conversation begins, there is a more fundamental question to answer: where does your data go, and who can see it?

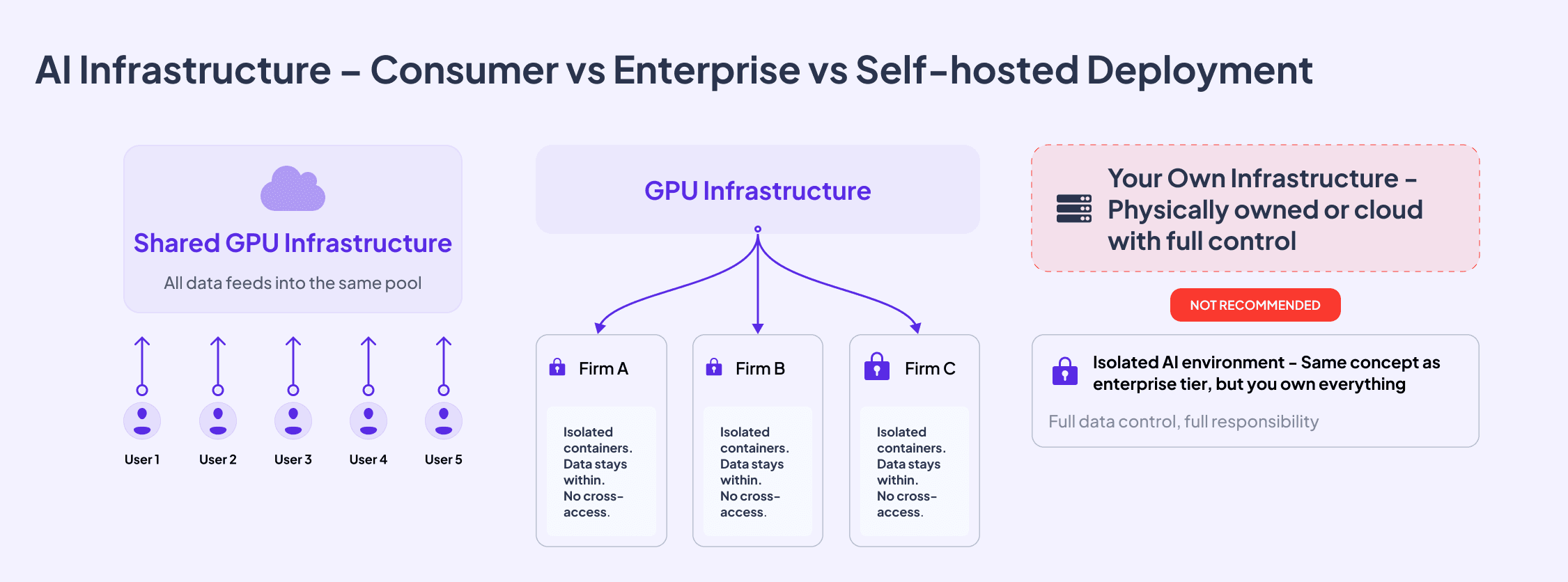

Most employees in portfolio companies are already using AI. They are using personal ChatGPT accounts, consumer-tier subscriptions, and free tools—for work tasks, with work data. If your portcos have not deployed enterprise-tier AI at the company level, this is almost certainly happening right now.

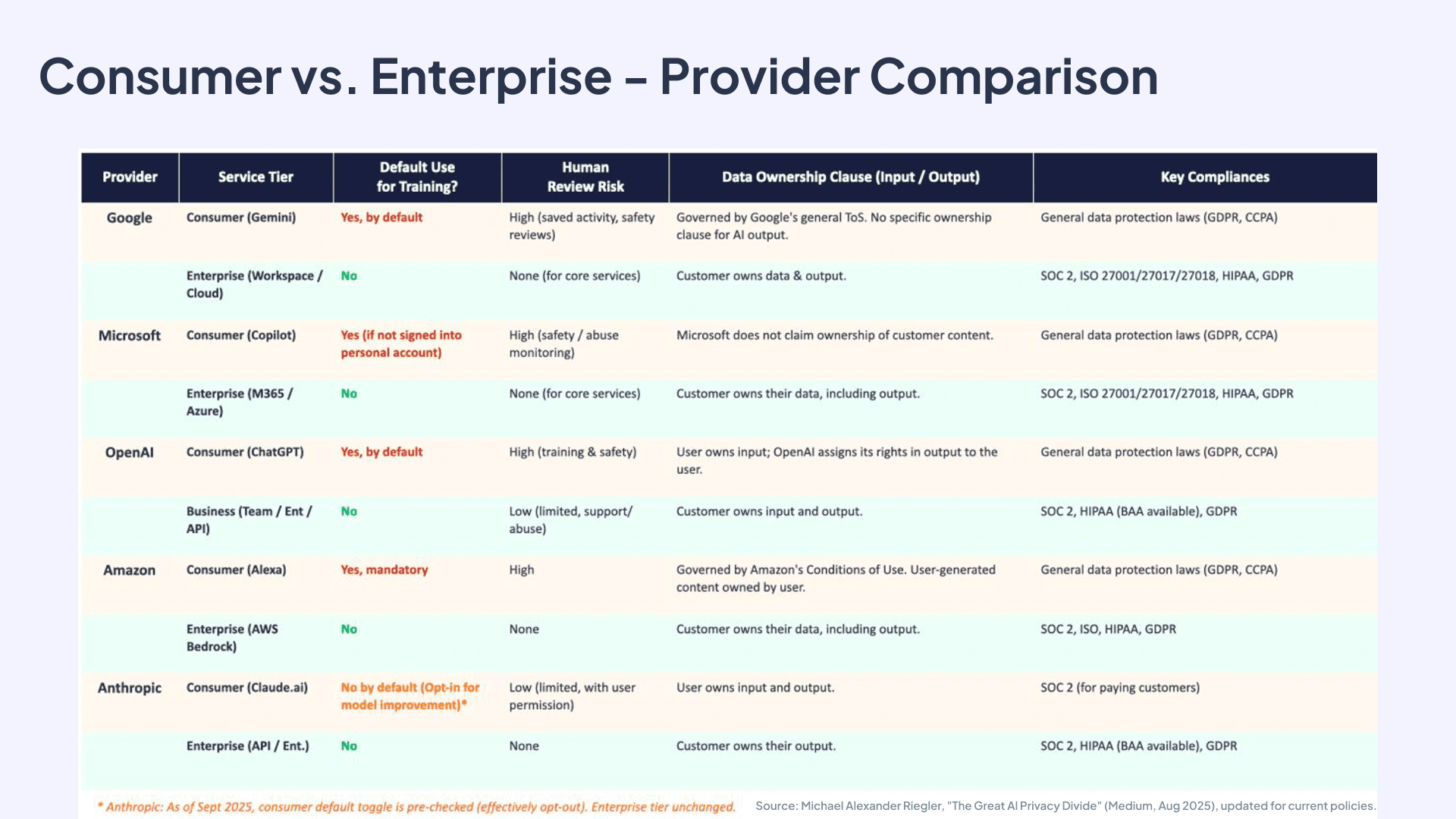

Consumer-tier deployments share infrastructure across all users. Your data feeds into the same pool as everyone else's, may be used for model training, and is covered only by the provider's standard privacy policy. There are no data processing agreements, no SOC 2 or ISO 27001 certifications, and limited or no audit rights.

Enterprise-tier deployments are fundamentally different. Your data sits in isolated containers, logically separated from other customers. It is not used for training. It is governed by contracts, data processing agreements, and formal compliance certifications. The analogy is Microsoft SharePoint or Google Workspace: you share infrastructure, but your data is yours.

A comparison across major providers—Google, Microsoft, OpenAI, Amazon, and Anthropic—shows the same pattern: consumer tier trains on your data by default; enterprise tier does not. The gap is significant.

The decision is simple: are you comfortable with consumer-level data protection on enterprise-level work? If yes, that is a legitimate choice—make it consciously. If not, the fix is not a technology project, but a procurement decision. Buy the enterprise licenses and distribute them.

There is a third deployment option—self-hosted, where you own and operate your own AI infrastructure. It offers full data control, but it comes with full responsibility: you maintain the models, operate the GPU clusters, and keep specialized engineering talent on staff. For most portfolio companies, the cost and complexity make this inadvisable.

Security concerns that will only grow

Deploying AI with access to company systems introduces a new category of security risk that deserves attention. AI agents that operate inside email, CRM, calendars, and financial systems create exposure if access is not scoped carefully.

Two risks are particularly worth flagging. First, prompt injection—where malicious instructions are embedded in content the AI agent reads (a supplier email, a document, a web page)—can cause the agent to act in unintended ways. Second, improperly scoped permissions mean that a compromise of one access point can cascade across everything the agent is connected to.

These are not theoretical concerns. They are the emerging cybersecurity attack vectors of the AI era, and they require the same kind of deliberate governance that companies apply to user access management today. Human-in-the-loop controls, audit logs, and confidence-scoring thresholds—where AI escalates to human review if it falls below a set certainty level—are practical mitigations that belong in every enterprise AI deployment.

Where productivity is heading

The cost of AI reasoning has dropped roughly 100x since 2023. What cost $10 per million tokens in early 2023 now costs around 10 cents. That price decline is not slowing—it's accelerating as new data centers come online and open-source models improve.

This matters operationally because it changes the ROI calculus on automation. Tasks that were not worth automating a year ago—because the AI processing cost outweighed the labor savings—now make financial sense. Constant monitoring, live analytics, and automated exception management are all becoming economically viable use cases.

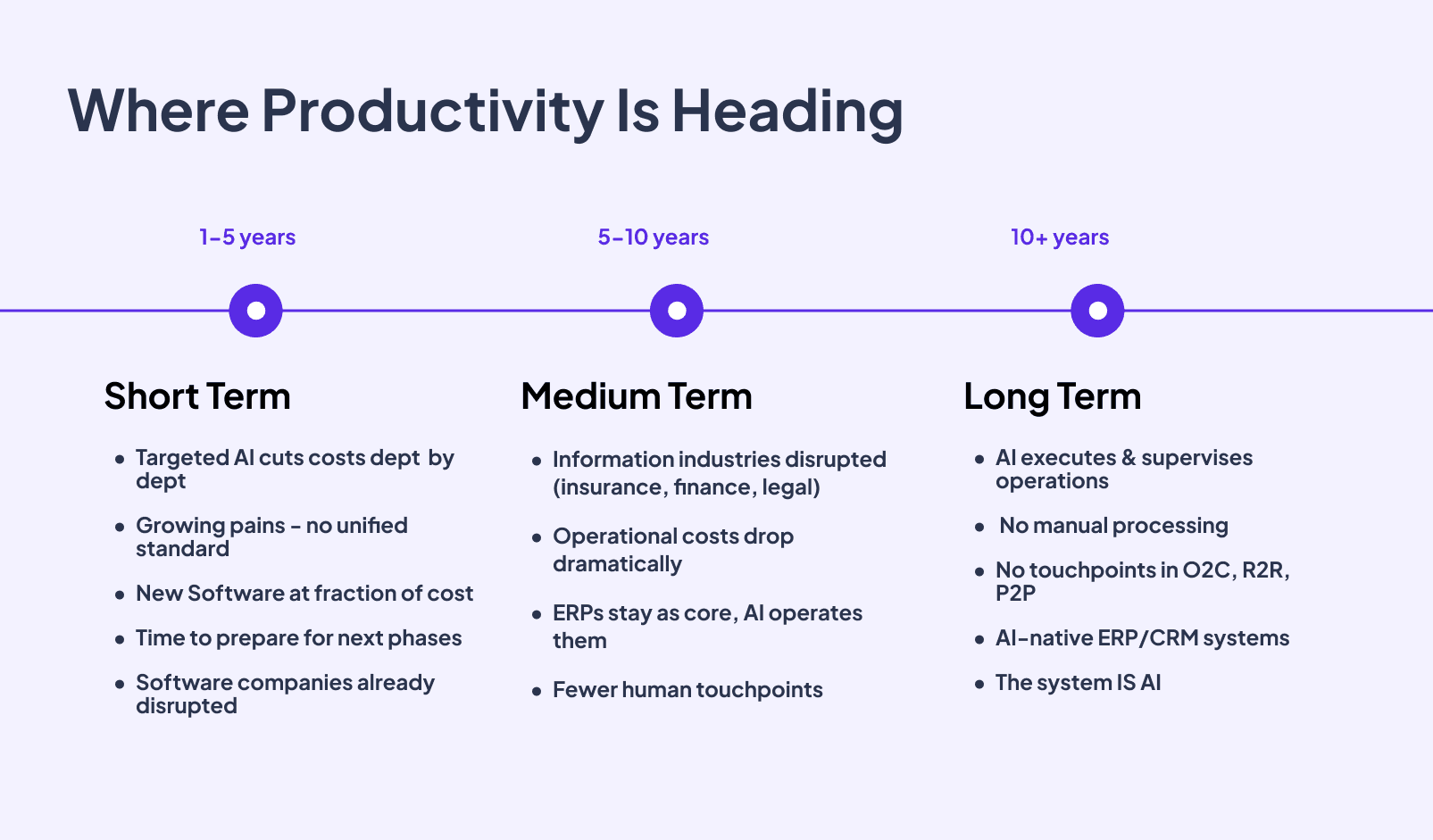

Here's how we see this playing out over three time horizons.

Short term (one to five years): Targeted AI implementations cut costs department by department. There is no unified standard yet, and implementations are mostly standalone—not connected across the full company. For manufacturing, logistics, and other physical-domain portcos, back-office functions are the primary automation opportunity. This is where you act now.

Medium term (five to 10 years): Information industries—insurance, finance, legal, consulting—face structural disruption, not just productivity improvement. Operational costs drop dramatically. ERPs and CRMs remain as core systems, but AI increasingly operates them rather than people. Software companies are already operating in this phase; their product development has been disrupted.

**Long term (10-plus years): **AI is not just operating systems. AI is the system. No manual processing. No human touchpoints in O2C, R2R, or P2P. AI-native ERP and CRM platforms emerge that hold business logic and integrity natively, not as a layer on top of existing software.

McKinsey offers a useful early signal. Their global managing partner disclosed in January 2026 that the firm now operates approximately 25,000 AI agents alongside 40,000 human employees—roughly 0.6 agents per person. The result: 25% of backend labor redeployed to client-facing roles, 10% output growth without headcount increases, and 1.5 million hours saved in search and synthesis work. Crucially, McKinsey noted it is now easier and faster to build an AI agent for back-office work than to hire and train a person for the same role.

That is where this is heading.

What's actually happening in AI implementations today

There is a significant gap between vendor demos and what is running in production. In our experience, 95% of live AI implementations follow the same pattern: traditional automation tools—RPA, workflow engines, low-code platforms—hand work to AI for reasoning and decision-making, then pull the output back. AI functions as the brain in the middle, not the orchestrator.

This is the opposite of how vendors present it. But it is what works reliably today. Full-scale MCP deployments across an entire company landscape, with AI autonomously orchestrating all processes, are not yet happening in production environments. Focused, bounded implementations are delivering real results.

An AI agent—properly defined—is reasoning capability plus a specific task plus execution capability. It is a specialist for one job, not a general-purpose employee replacement. The companies getting results are the ones treating AI that way.

What this looks like in practice: three deployment examples

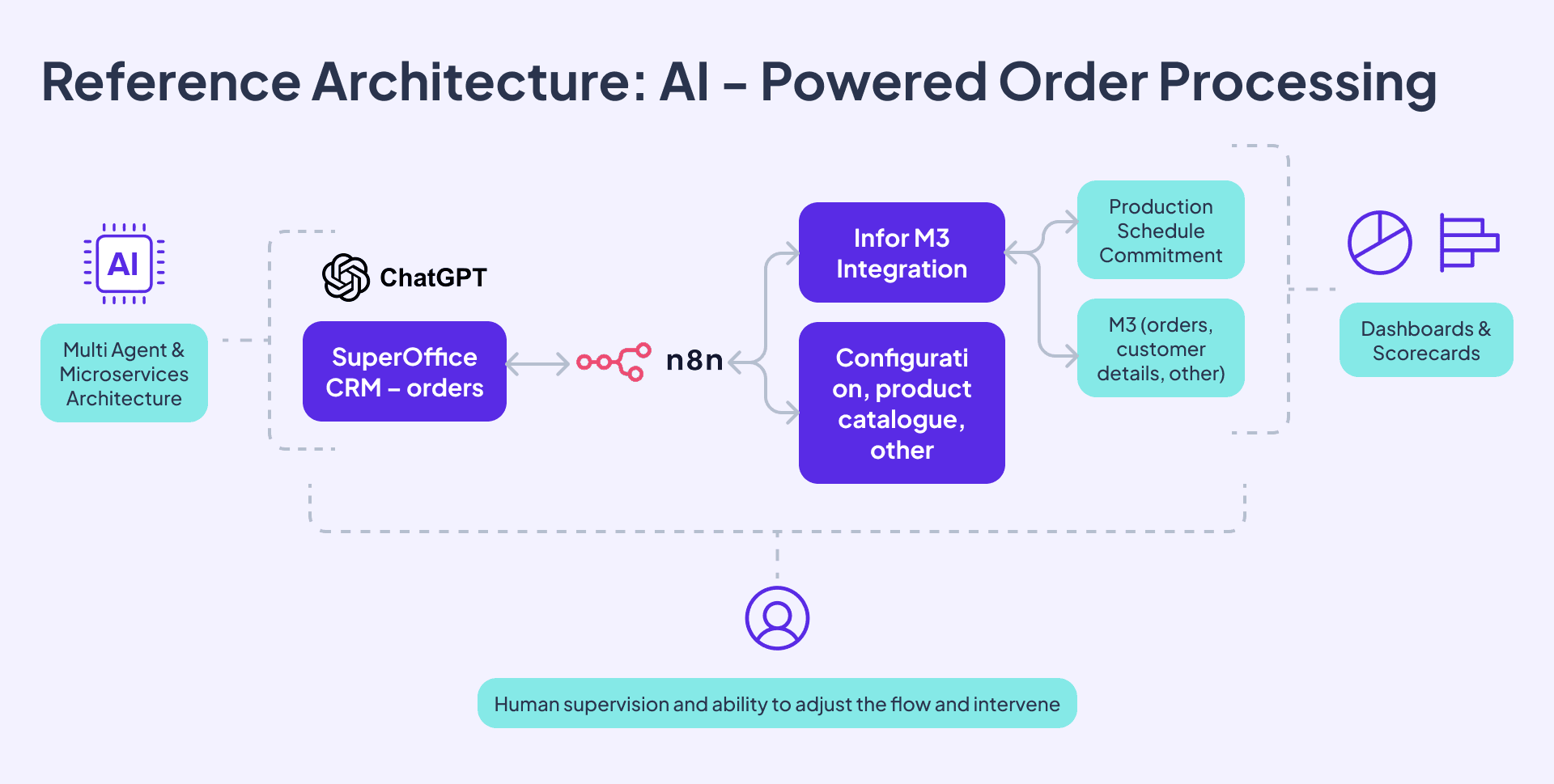

AI-powered order processing

One portfolio company had 63 people processing orders that arrived in unstructured formats—emails, PDFs, voice messages—with a two-day average lead time and constant production planning problems. We added an AI agent that reads incoming orders, structures them into a standardized repository, cross-references them against product configuration, stock levels, and customer payment terms, and passes validated orders through to production planning via RPA.

The result: 60-70% reduction in processing time, 80% reduction in customer service effort related to order errors, and a path to automating approximately 30 of those 63 FTEs—with a trajectory toward 80% automation as the system learns.

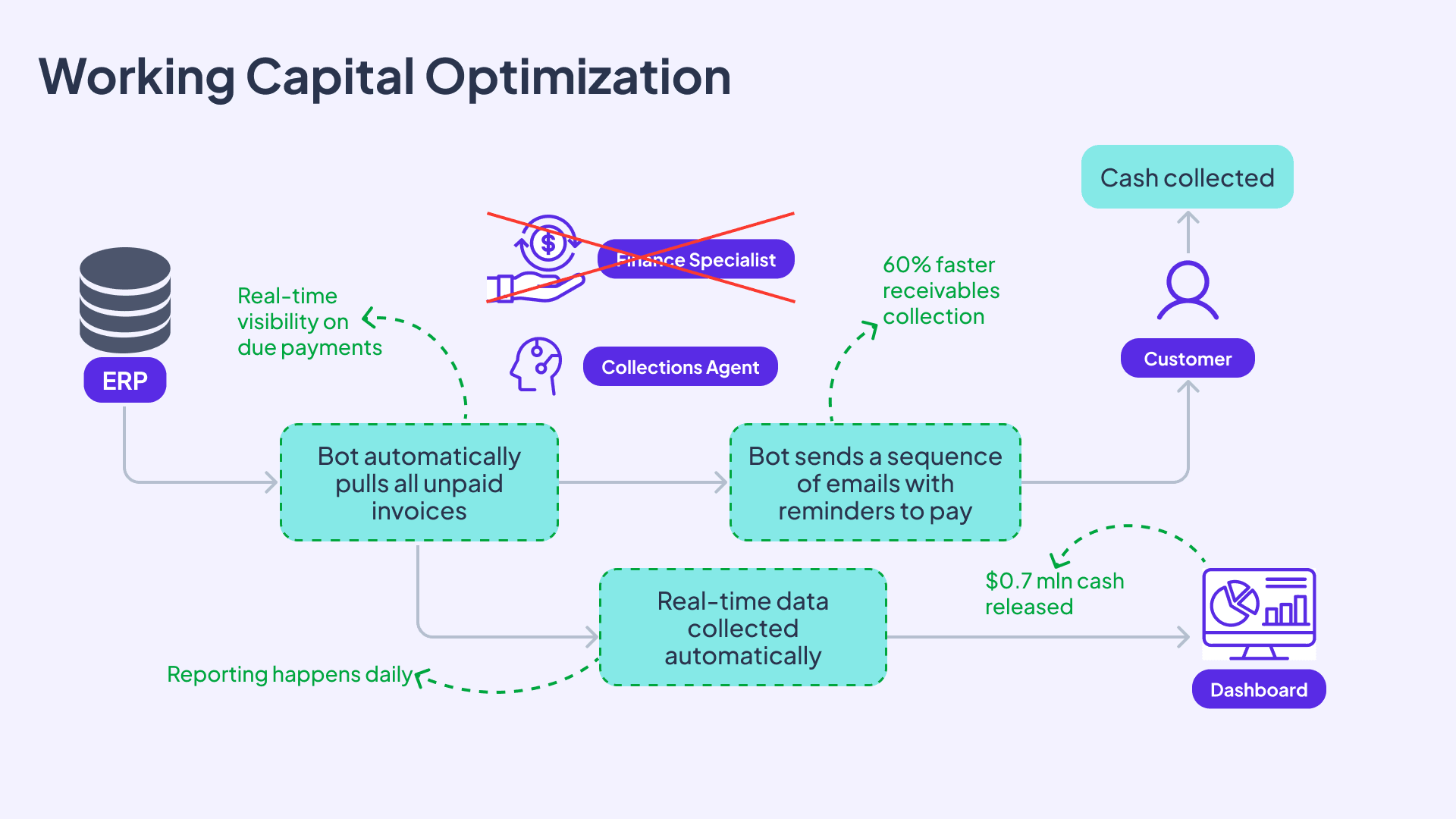

Working capital optimization through automated collections

A multi-market business was carrying $1.2 million in overdue invoices on average. Their finance team wanted to follow up with debtors systematically, but with tens of thousands of customers across 11 markets, manual outreach was not feasible.

A collections agent now automatically pulls unpaid invoices from the ERP, sends a structured sequence of reminder communications, and updates management dashboards in real time. In the UK market, the late receivables rate dropped from 25% to 7-8%—releasing $2.7 million in overdue amounts. In Poland, the unpaid balance dropped from $1 million to $350,000, with a four-day DSO reduction. ROI payback was under three months in both markets.

The solution was designed to scale: the client took it from two markets to all 11 themselves.

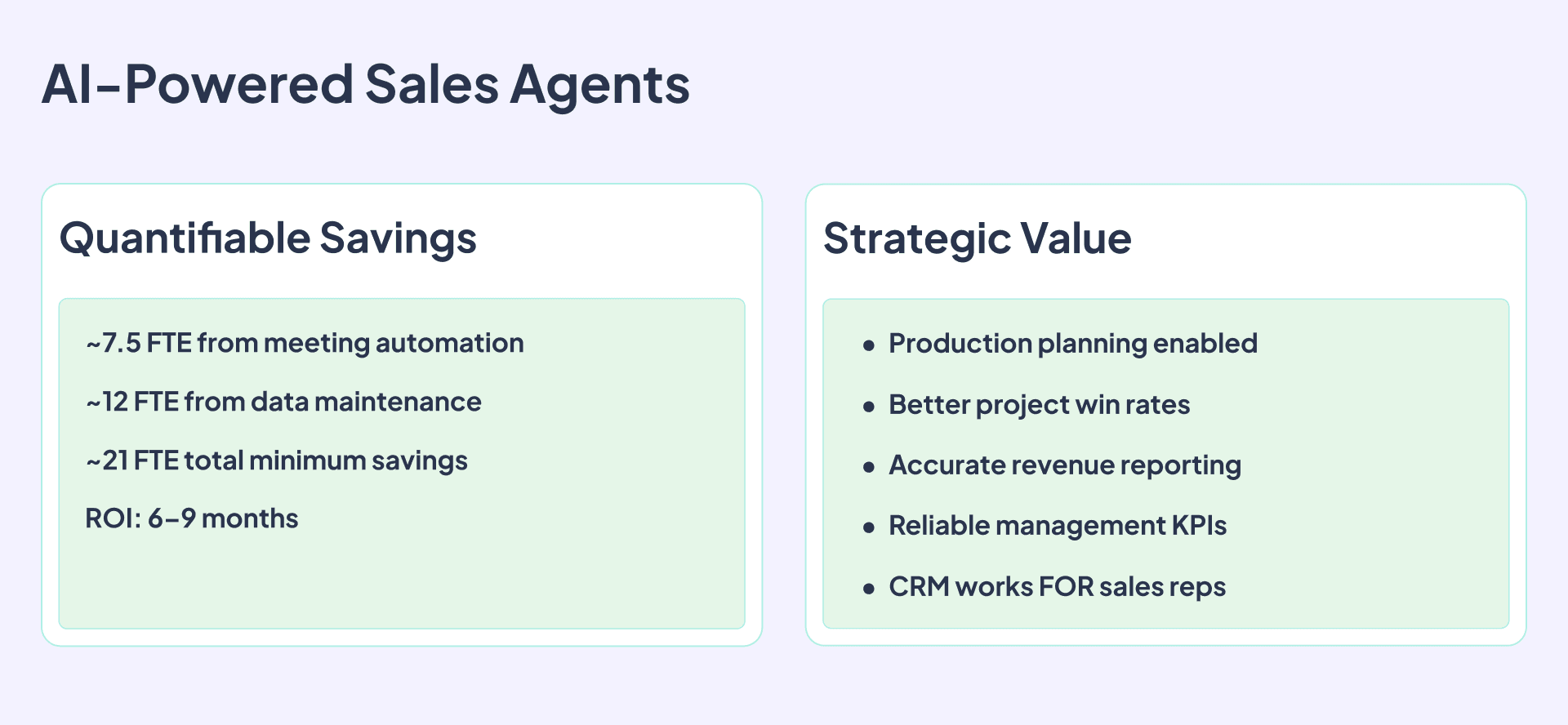

AI-powered internal sales agents

A manufacturing company with 130 sales reps had a CRM that virtually no one used. Only 30-40% of meetings were properly registered, 0% of the pipeline was reliable enough to use for production planning, and approximately 21 FTEs worth of time was being consumed by manual data entry and CRM admin.

The solution is being built in three phases: meeting administration automation (AI voice agents for notes, auto-registration in CRM), pipeline management (validation, expiry notifications, stakeholder tracking), and company and contact data enrichment.

Projected FTE savings are approximately 7.5 from Phase 1 and 12 from Phase 3 with a total of 21 FTEs equivalent to be routed towards selling, with a total investment of 110-150K EUR over six months. The strategic value is in the revenue line—better forecasting, higher win rates, and production planning that finally reflects actual demand.

The right starting point

The single biggest obstacle to AI adoption in portfolio companies is company inertia. Most portco leadership teams are not thinking about this, have not prioritized it, and are waiting for the picture to become clearer before committing. Meanwhile, competitors and disruptors are moving.

The path forward is not complicated. Assess where you stand—do your portcos have enterprise-tier AI subscriptions deployed? Does any process exist that is high-volume, rule-based, and time-consuming enough to be worth automating? Pick one starting point and run a pilot. A successful pilot changes the conversation internally more effectively than any strategy document.

The companies that build capability now—governance frameworks, enterprise AI subscriptions, and at least one proven automation in production—will be measurably better positioned when the medium-term disruption arrives. The companies that are still running manual processes across their back offices when AI cost curves hit their next inflection will face a structural disadvantage.

**The window to prepare is open. It will not stay that way. **